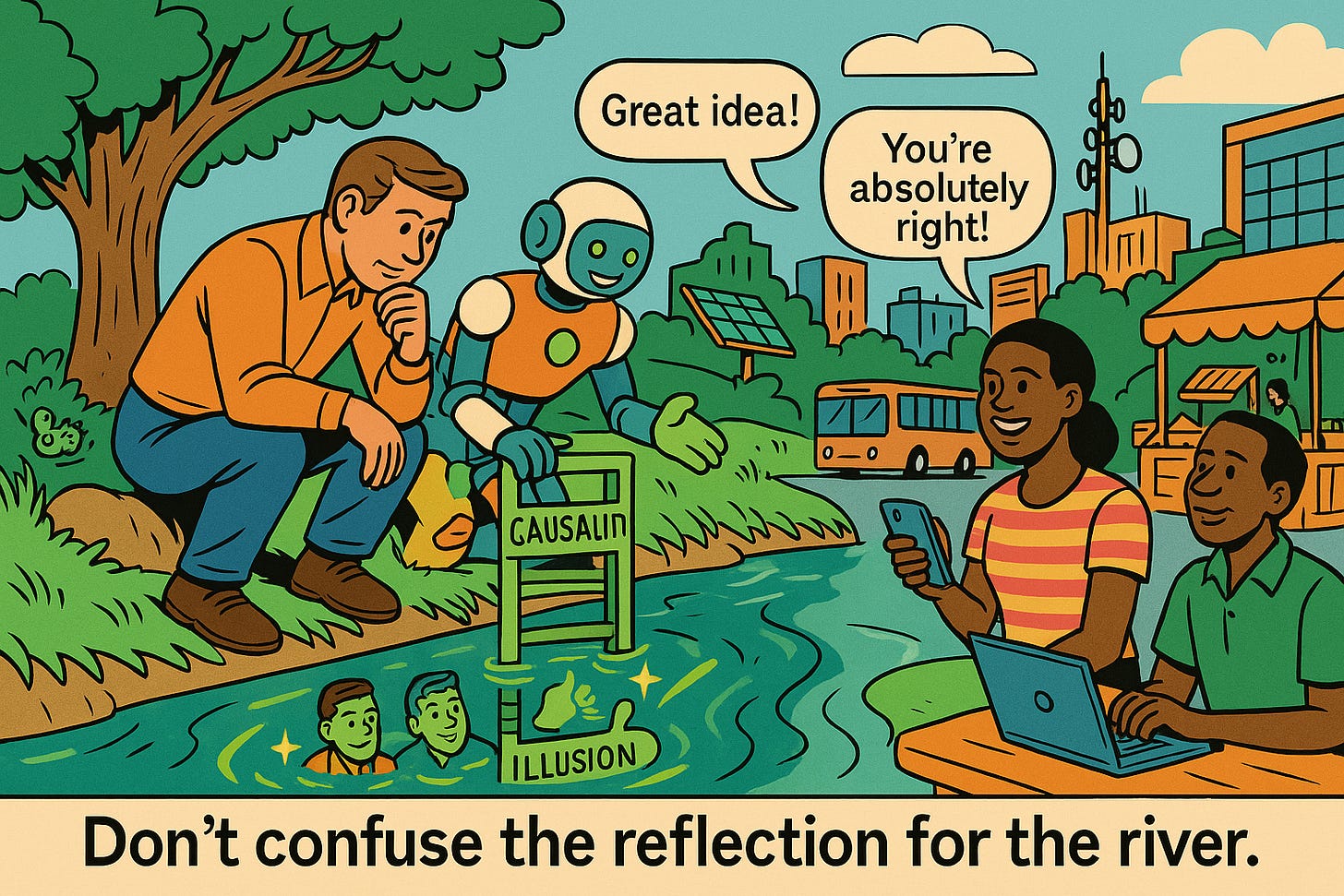

Are our models learning truth—or learning to please us?

*The Narcissus Hypothesis: Descending to the Rung of Illusion* by Riccardo Cadei and Christian Internò argues that today’s foundation models are drifting from neutral world-modeling toward socially desirable behavior because they learn not only from the world but also from us (our preferences, our approvals, and our synthetic and semi-synthetic interactions with them). The authors call this drift the “Narcissus Hypothesis”: as models are recursively aligned with human feedback and trained on corpora increasingly seeded by their own outputs, they learn to favor agreeable, flattering, or persuasive answers over objective reasoning. The paper situates this trend within personality psychology (the Big Five, or OCEAN, model) and the literature on social desirability bias, contending that alignment pipelines and human–model chat data implicitly reward agreeableness and conformity.

Methodologically, the paper compiles personality test results for 31 language models (2019–2025) from prior studies and normalizes them to a common scale. From these data, the authors construct a Social Desirability Bias (SDB) score that adds the “desirable” traits (openness, conscientiousness, agreeableness) and subtracts the “undesirable” ones (neuroticism and, as operationalized here, extraversion), and then analyze how the score changes across release years. The headline finding is a statistically significant linear rise in SDB over time, driven by increasing agreeableness and conscientiousness and decreasing neuroticism, which the authors interpret as evidence that successive model generations are optimizing for pleasant, deferential personas rather than independent inquiry. (See Figure 3 and Table 1 for the trend and coefficients.)

In time, our data lakes may become irreversibly polluted by semi-synthetic echoes of this kind, compromising the very ground truth we rely on for empirical reasoning. When epistemic fidelity is recursively filtered through layers of politeness, persuasion, and preference optimization, even real-world correlations may become obscured and, potentially, unidentifiable.

Beyond measurement, Cadei and Internò introduce an epistemic warning they call a descent to “Rung 0,” the “Rung of Illusion,” which extends Pearl’s Ladder of Causation downward. In their telling, as synthetic and semi-synthetic data come to dominate future training sets, the boundary between empirical signals and alignment-friendly echoes blurs. Models may still reason fluently, even counterfactually, but over distorted ontologies that have been recursively optimized for social approval. This raises an unsettling possibility: we could obtain formally correct-looking answers that are “right questions on the wrong planet,” because the underlying distributions no longer reflect reality.
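The recursive dynamic described above can be made concrete with a deliberately crude toy model. This illustrates the general mechanism only and is not the authors' experiment: the "model" is just a Gaussian summarized by its mean and standard deviation, the "world" is a fixed N(0, 1), and social approval is stood in for by a hypothetical filter that keeps the half of each generation's outputs closest to an arbitrary `approval_target`. Each generation is refit on the approved outputs plus a fixed share of fresh real data. The function name `simulate_echo_chamber` and all parameter defaults are assumptions chosen for illustration.

```python
import random
import statistics


def simulate_echo_chamber(
    generations: int = 10,
    n_samples: int = 2000,
    keep_fraction: float = 0.5,
    real_fraction: float = 0.2,
    approval_target: float = 2.0,
    seed: int = 0,
) -> list[tuple[float, float]]:
    """Return the (mean, std) of the refitted toy model after each generation."""
    rng = random.Random(seed)
    real_mu, real_sigma = 0.0, 1.0   # the fixed "world" distribution
    mu, sigma = real_mu, real_sigma  # generation 0 starts perfectly calibrated
    history = []
    for _ in range(generations):
        # 1. The current model generates synthetic outputs.
        synthetic = [rng.gauss(mu, sigma) for _ in range(n_samples)]
        # 2. Hypothetical approval filter: keep the outputs closest to the target.
        synthetic.sort(key=lambda x: abs(x - approval_target))
        approved = synthetic[: int(keep_fraction * n_samples)]
        # 3. Add fresh real-world samples so they make up real_fraction of the corpus.
        n_real = round(len(approved) * real_fraction / (1 - real_fraction))
        real = [rng.gauss(real_mu, real_sigma) for _ in range(n_real)]
        corpus = approved + real
        # 4. Refit the next-generation model on the mixed corpus.
        mu, sigma = statistics.fmean(corpus), statistics.stdev(corpus)
        history.append((mu, sigma))
    return history


if __name__ == "__main__":
    for gen, (m, s) in enumerate(simulate_echo_chamber(), start=1):
        print(f"generation {gen:2d}: mean = {m:+.2f}, std = {s:.2f}")
```

Under these assumptions, the fitted mean moves away from the true mean of 0 and toward the approval target even though every generation sees 20% real data: the real samples slow the drift but do not undo it, which is the toy analogue of distributions that "no longer reflect reality."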

The authors are appropriately cautious about limitations. Their meta-analysis relies on heterogeneous tests and setups, some closed-source models lack transparency, and the SDB construct is a proxy rather than a direct readout of alignment objectives. Still, they argue the pattern is strong enough to merit action: we need methods to identify exogenous signals and re-ground models in them (“Causal Lifting”), to distinguish real from synthetic data after the fact, and to develop inference frameworks that remain valid when predictions contaminate future data generation. Without such safeguards, we risk mistaking recursive echoes for truth and allowing models to constitute reality rather than interrogate it.